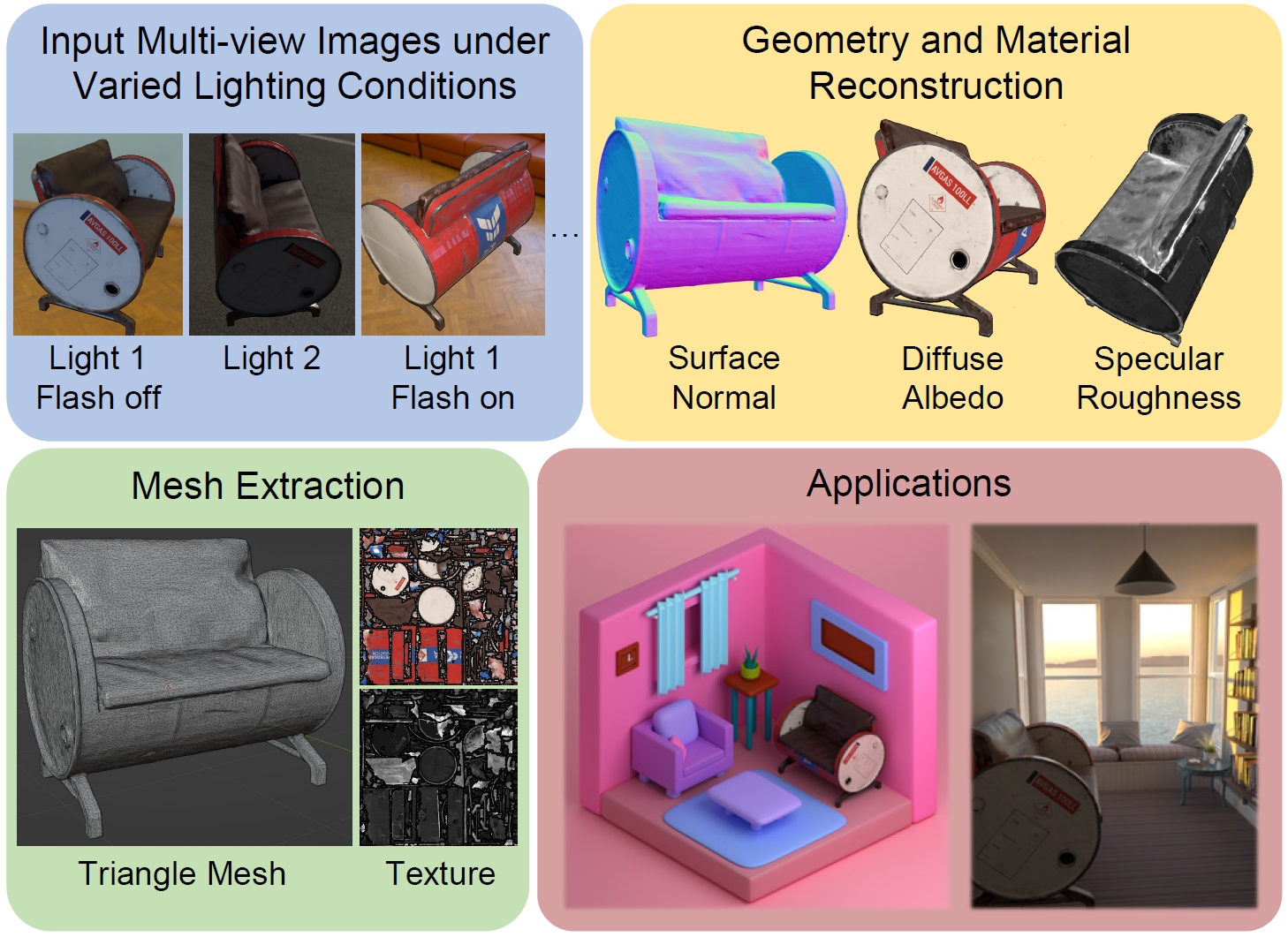

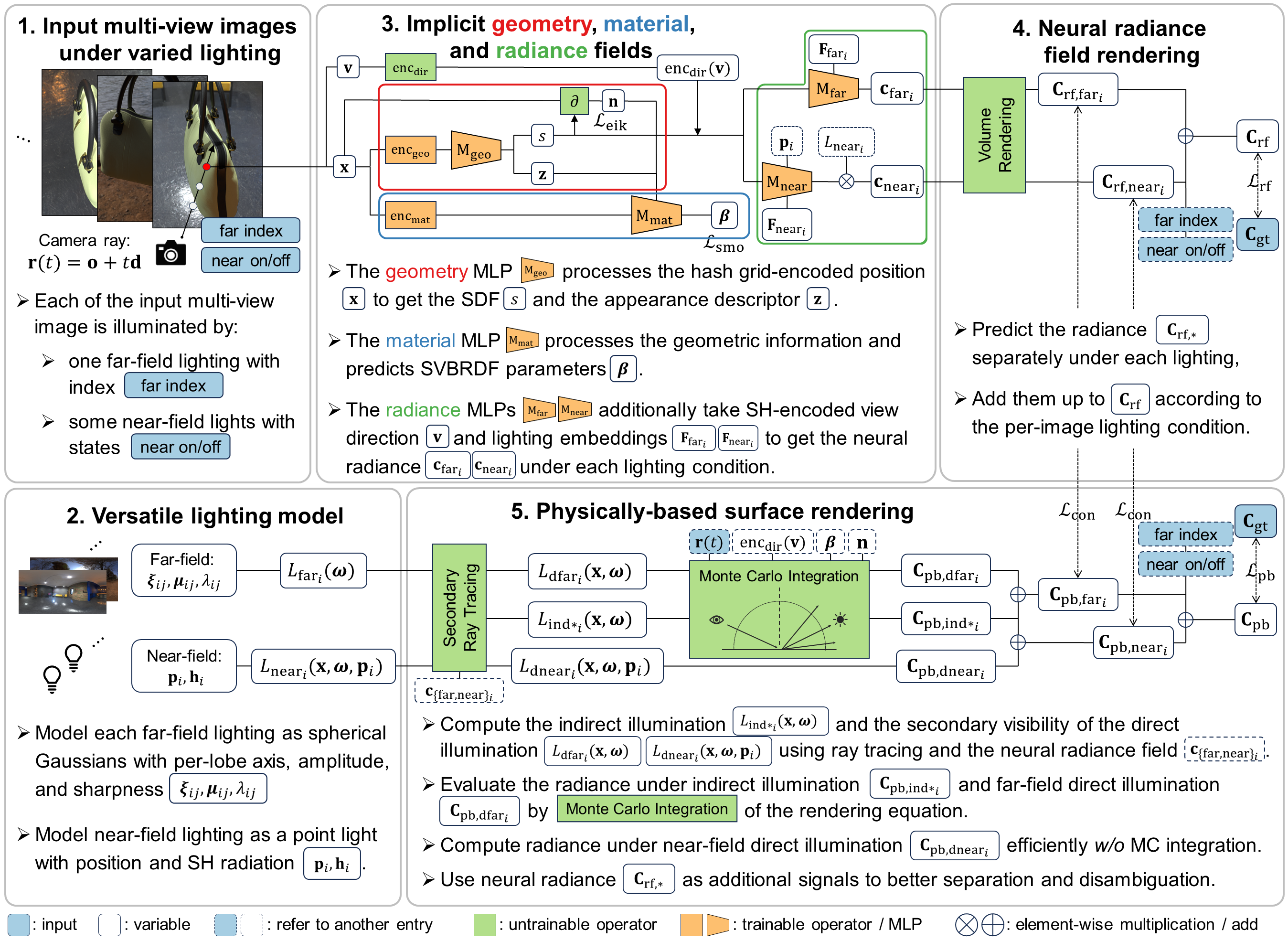

This paper introduces a versatile multi-view inverse rendering framework with near- and far-field light sources. To tackle the inherent ambiguity of inverse rendering, our framework adopts a lightweight yet inclusive lighting model that covers different near- and far-field lights, and can therefore use input images captured under whatever varied lighting conditions are available. It leverages the observations under each lighting condition to disentangle the intrinsic geometry and material from the external lighting, using both neural radiance field rendering and physically-based surface rendering on the 3D implicit fields. After training, the reconstructed scene is extracted as a textured triangle mesh for seamless integration into industrial rendering software for various applications. In quantitative and qualitative tests on synthetic and real-world scenes, our method outperforms state-of-the-art multi-view inverse rendering methods in both speed and quality.

@inproceedings{VMINer24,
  author    = {Fan Fei and Jiajun Tang and Ping Tan and Boxin Shi},
  title     = {{VMINer}: Versatile Multi-view Inverse Rendering with Near- and Far-field Light Sources},
  booktitle = {{IEEE/CVF} Conference on Computer Vision and Pattern Recognition, {CVPR} 2024, Seattle, WA, USA, June 17-22, 2024},
  pages     = {11800--11809},
  publisher = {{IEEE}},
  year      = {2024},
}